Table of Contents

- What Did Google Actually Update?

- Why This Update Matters for SEO

- Does This Affect Your Website?

- Why Technocrackers Clients Are Safe

- How Googlebot Crawling Works (Simplified)

- SEO Risks of Large HTML Pages

- Best Practices to Stay Crawl-Optimized

- 1. Keep HTML Clean and Lightweight

- 2. Load Scripts Asynchronously

- 3. Prioritize Content in HTML Source

- 4. Avoid Heavy Page Builders

- 5. Optimize Media and Lazy Load Assets

- How This Impacts AI Search, LLMs & AI Overviews

- Does This Affect PDFs and Downloads?

- What Should Website Owners Do Now?

- How Technocrackers Builds Google-Compliant Websites

- Key Takeaways

- Final Thoughts

Google recently updated its official documentation clarifying how much content Googlebot crawls from different file types. While social media headlines suggested that Google reduced crawl limits for webpages to 2MB, that information is incorrect. The real update confirms long-standing limits — and most websites are not affected.

At Technocrackers, we already build websites using lightweight, performance-first architecture that aligns with Google’s crawling and indexing standards. This update simply reinforces best practices we already follow — and importantly, existing Technocrackers clients are not impacted by this change.

In this guide, we’ll break down what Google actually updated, what it means for SEO, and how to future-proof your website.

What Did Google Actually Update?

According to Google’s documentation and reporting by Search Engine Land, Googlebot crawl limits are:

| File Type | Crawl Limit |
| --- | --- |
| HTML web pages | 15MB |
| Supported file types (non-HTML) | 2MB |
| PDF files | 64MB |

This means:

- Google crawls the first 15MB of an HTML page

- Google crawls 2MB of supported file types

- Google crawls 64MB of PDF documents

There was no reduction to HTML crawl limits. Google simply clarified its documentation.
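To make the numbers concrete, here is a minimal Python sketch that estimates how much of a file falls inside the limits listed above. The `CRAWL_LIMITS` table and the `crawl_coverage` helper are our own illustrative names, not anything Google provides, and we assume binary megabytes (1MB = 1,048,576 bytes), which the documentation may or may not intend.

```python
# Limits taken from the table in this article; names are illustrative only.
MB = 1024 * 1024  # assumption: binary megabytes

CRAWL_LIMITS = {
    "html": 15 * MB,
    "pdf": 64 * MB,
    "other": 2 * MB,  # supported non-HTML file types
}


def crawl_coverage(size_bytes: int, file_type: str) -> float:
    """Return the fraction (0.0 to 1.0) of a file that fits inside the limit."""
    # Unknown file types fall back to the "other" limit (an assumption).
    limit = CRAWL_LIMITS.get(file_type, CRAWL_LIMITS["other"])
    if size_bytes <= 0:
        return 1.0
    return min(1.0, limit / size_bytes)
```

For example, `crawl_coverage(30 * MB, "html")` reports that only half of a hypothetical 30MB HTML file would be within the limit, while any typical page returns `1.0`.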

Why This Update Matters for SEO

Even though most websites are well under 15MB, this update highlights a growing focus on:

- Efficient crawling

- Page performance

- Clean code structure

- Content prioritization

Googlebot prioritizes the top portion of your page source — including:

- Headings

- Primary content

- Internal links

- Structured data

- Meta tags

If your site is bloated with excessive scripts, inline CSS, tracking pixels, or heavy page builders, important SEO signals could be pushed lower in the HTML — risking partial crawling.

Does This Affect Your Website?

For most websites, the answer is no.

Modern pages typically ship between 200KB and 2MB of HTML, far below Google’s 15MB crawl threshold.

Only extremely heavy websites could run into crawl inefficiencies, typically those with:

- Excessive JavaScript bundles

- Massive inline stylesheets

- Poorly optimized builders

- Multiple embedded tracking scripts

Why Technocrackers Clients Are Safe

At Technocrackers, every website is built using:

- Lightweight HTML output

- Optimized CSS and JS loading

- Performance-first page architecture

- SEO-friendly internal linking

- Clean DOM structure

- Core Web Vitals compliance

Because of this, all existing Technocrackers clients remain fully compliant with Google’s crawling standards — and this documentation update does not negatively affect their rankings, indexing, or visibility.

In fact, our development and SEO standards already exceed Google’s crawl efficiency expectations.

How Googlebot Crawling Works (Simplified)

When Googlebot fetches a page, it:

- Downloads the HTML source

- Parses key content and links

- Discovers new URLs

- Renders the page (if needed)

- Indexes meaningful content

If the HTML exceeds crawl limits (rare), Googlebot:

- Stops processing beyond the threshold

- May miss internal links

- May skip structured data

- Could ignore lower-priority content

That’s why clean structure matters more than size alone.
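The effect of a cutoff can be approximated locally. The sketch below is a simplified simulation, not Googlebot's actual behavior: it parses only the first `limit_bytes` of a page with Python's standard `html.parser` and reports which links appear only after that point. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from complete <a> start tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in(html_bytes: bytes) -> list[str]:
    parser = LinkCollector()
    # errors="ignore" tolerates a multi-byte character cut by truncation.
    # close() is deliberately not called, so a tag cut off mid-way is
    # left in the buffer and never reported.
    parser.feed(html_bytes.decode("utf-8", errors="ignore"))
    return parser.links


def links_missed(html_bytes: bytes, limit_bytes: int) -> list[str]:
    """Return links found in the full page but not in the first limit_bytes."""
    seen = set(links_in(html_bytes[:limit_bytes]))
    return [href for href in links_in(html_bytes) if href not in seen]
```

Running `links_missed(page_bytes, 15 * 1024 * 1024)` on a saved copy of a page should return an empty list for almost every real site. Lowering the limit artificially shows which links sit deepest in the source.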

SEO Risks of Large HTML Pages

While most sites won’t hit 15MB, bloated pages can still cause:

- ❌ Reduced crawl efficiency

- ❌ Delayed indexing

- ❌ Poor Core Web Vitals

- ❌ JavaScript rendering issues

- ❌ Lower content visibility

This is especially true for:

- Ecommerce websites

- SaaS platforms

- Builder-heavy WordPress themes

- Script-heavy marketing pages

Best Practices to Stay Crawl-Optimized

Here’s what Technocrackers follows — and what every site should implement:

1. Keep HTML Clean and Lightweight

Avoid inline scripts and excessive div nesting. Clean DOM = better crawling.
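As a rough way to quantify this, the following Python sketch measures two simple signals with the standard `html.parser` module: the deepest level of nested `div` elements and the number of characters inside inline scripts. The names `BloatMeter` and `measure_bloat` are hypothetical, and what counts as "excessive" is a judgment call we leave to the reader.

```python
from html.parser import HTMLParser


class BloatMeter(HTMLParser):
    """Tracks div nesting depth and the size of inline <script> content."""

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.max_div_depth = 0
        self.inline_script_chars = 0
        self._in_inline_script = False

    def handle_starttag(self, tag, attrs):
        if tag == "div":
            self.depth += 1
            self.max_div_depth = max(self.max_div_depth, self.depth)
        elif tag == "script" and "src" not in dict(attrs):
            # A script without src carries its code inline.
            self._in_inline_script = True

    def handle_endtag(self, tag):
        if tag == "div" and self.depth > 0:
            self.depth -= 1
        elif tag == "script":
            self._in_inline_script = False

    def handle_data(self, data):
        if self._in_inline_script:
            self.inline_script_chars += len(data)


def measure_bloat(html: str) -> dict:
    meter = BloatMeter()
    meter.feed(html)
    meter.close()
    return {
        "max_div_depth": meter.max_div_depth,
        "inline_script_chars": meter.inline_script_chars,
    }
```

Comparing these two numbers before and after a template cleanup gives a quick, if crude, indication of whether the markup actually got leaner.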

2. Load Scripts Asynchronously

Use the defer and async attributes on script tags so that JavaScript does not block rendering.
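To find scripts that still block rendering, a small audit along these lines can help. It is a sketch using Python's standard `html.parser`. It lists external scripts that carry neither `async` nor `defer`, and it skips `type="module"` scripts, which browsers defer by default. The names `BlockingScriptFinder` and `blocking_scripts` are our own.

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Collects src values of external scripts loaded without async/defer."""

    def __init__(self):
        super().__init__()
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        attr_map = dict(attrs)  # boolean attributes appear with value None
        src = attr_map.get("src")
        if not src:
            return  # inline scripts are out of scope for this check
        is_module = (attr_map.get("type") or "").lower() == "module"
        if "async" not in attr_map and "defer" not in attr_map and not is_module:
            self.blocking.append(src)


def blocking_scripts(html: str) -> list[str]:
    finder = BlockingScriptFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking
```

Any URL this returns is a candidate for `defer` (when execution order matters) or `async` (when it does not).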

3. Prioritize Content in HTML Source

Ensure the following appear early in the DOM:

- H1-H3 headings

- Primary text

- Key internal links

- Schema markup
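One rough way to check how early these elements appear is to look at their byte offsets in the raw HTML. The sketch below uses plain regular expressions as a proxy. It does not parse the DOM, the `MARKERS` patterns are our own simplifications, and the first link found may be a navigation link rather than a key internal link.

```python
import re

# Simplified patterns; each is an assumption about how the markup looks.
MARKERS = {
    "h1": re.compile(rb"<h1[\s>]", re.IGNORECASE),
    "first_link": re.compile(rb"<a\s[^>]*href=", re.IGNORECASE),
    "json_ld": re.compile(rb"application/ld\+json", re.IGNORECASE),
}


def first_offsets(html_bytes: bytes) -> dict:
    """Map each marker to the byte offset of its first match, or None."""
    report = {}
    for name, pattern in MARKERS.items():
        match = pattern.search(html_bytes)
        report[name] = match.start() if match else None
    return report
```

If the first `h1` only shows up hundreds of kilobytes into the source, that usually points to large inline styles or scripts sitting above the content.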

4. Avoid Heavy Page Builders

Many builders inflate HTML size unnecessarily.

5. Optimize Media and Lazy Load Assets

Images, videos, and embeds should load only when needed.
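A quick audit can show which images and embeds are still loaded eagerly. This Python sketch, built on the standard `html.parser`, lists `img` and `iframe` elements that lack the native `loading="lazy"` attribute. The names `LazyLoadAudit` and `eager_assets` are hypothetical, and the check does not detect JavaScript-based lazy loading.

```python
from html.parser import HTMLParser


class LazyLoadAudit(HTMLParser):
    """Collects src values of <img>/<iframe> tags without loading="lazy"."""

    def __init__(self):
        super().__init__()
        self.eager = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("img", "iframe"):
            return
        attr_map = dict(attrs)
        if (attr_map.get("loading") or "").lower() != "lazy":
            self.eager.append(attr_map.get("src") or "")


def eager_assets(html: str) -> list[str]:
    audit = LazyLoadAudit()
    audit.feed(html)
    audit.close()
    return audit.eager
```

Not everything in the result needs fixing: images visible at the top of the page, such as a hero image, are generally better loaded eagerly so they appear without delay.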

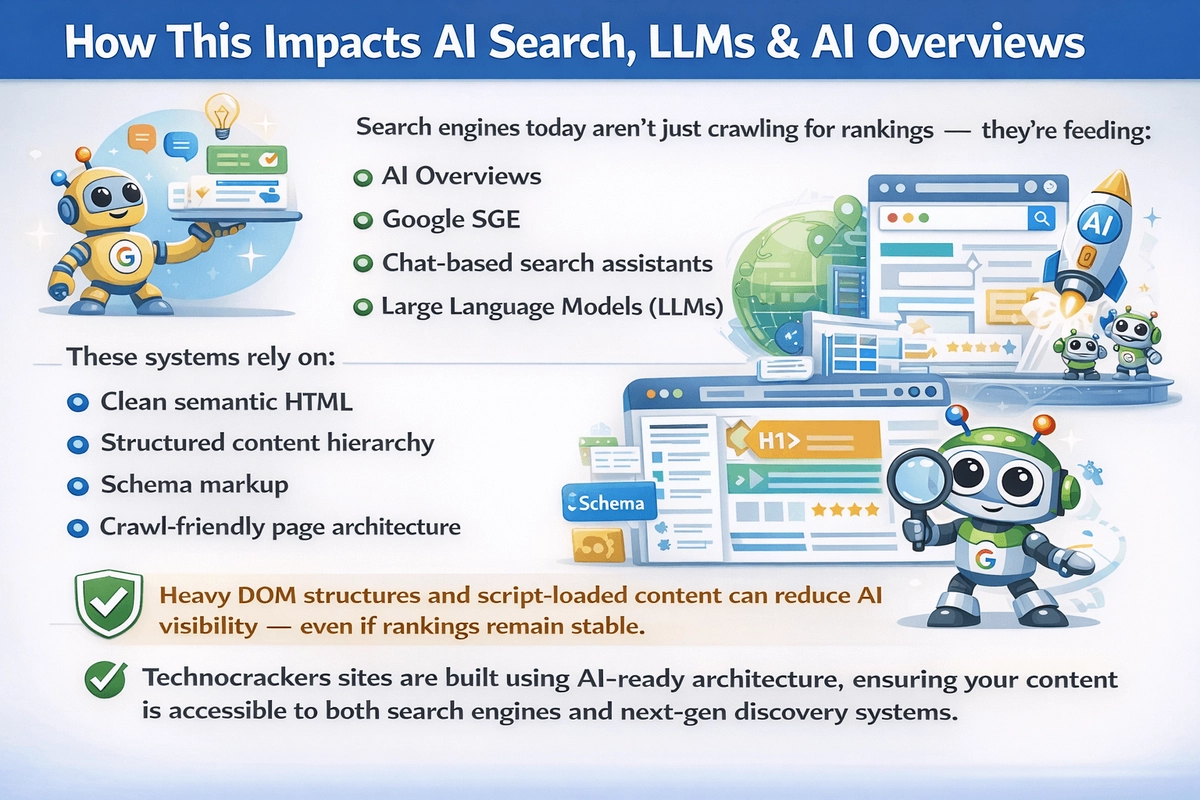

How This Impacts AI Search, LLMs & AI Overviews

Search engines today aren’t just crawling for rankings — they’re feeding:

- AI Overviews

- Google SGE (Search Generative Experience, since rebranded as AI Overviews)

- Chat-based search assistants

- Large Language Models (LLMs)

These systems rely on:

- Clean semantic HTML

- Structured content hierarchy

- Schema markup

- Crawl-friendly page architecture

Heavy DOM structures and script-loaded content can reduce AI visibility — even if rankings remain stable.

Technocrackers sites are built using AI-ready architecture, ensuring your content is accessible to both search engines and next-gen discovery systems.

Does This Affect PDFs and Downloads?

Yes — but only for non-HTML formats.

Google crawls:

- First 64MB of PDFs

- First 2MB of other supported file types

So large brochures, catalogs, whitepapers, and downloadable content should:

- Stay under recommended limits

- Be structured clearly

- Include crawlable text layers

- Avoid excessive embedded media

Technocrackers ensures all document assets remain crawl-optimized.

What Should Website Owners Do Now?

For most businesses:

👉 Nothing

But if you want to stay future-proof:

- Run HTML size audits

- Improve page speed scores

- Reduce JavaScript bloat

- Optimize internal linking

- Improve crawl efficiency

- Prioritize semantic content structure

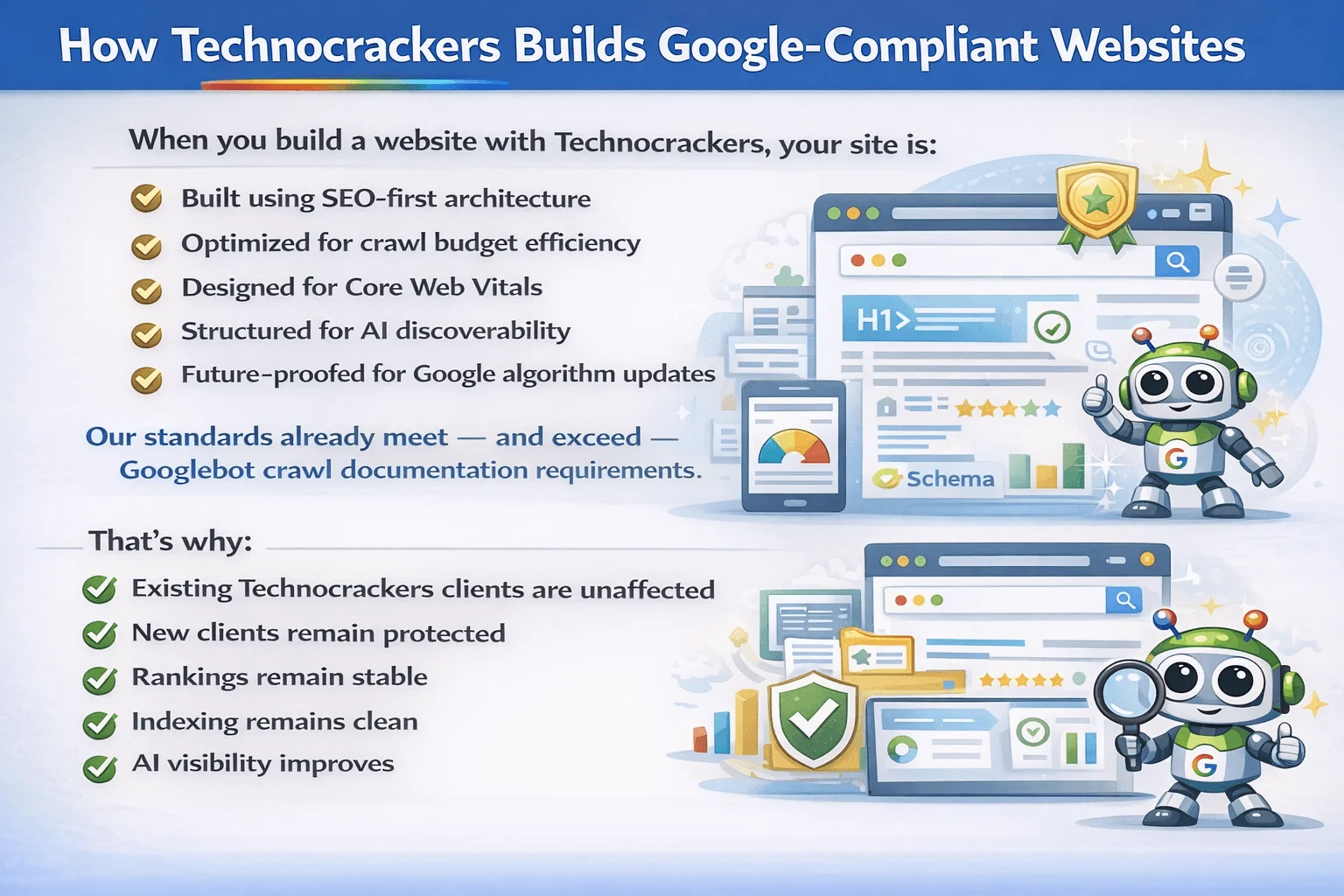

How Technocrackers Builds Google-Compliant Websites

When you build a website with Technocrackers, your site is:

- Built using SEO-first architecture

- Optimized for crawl budget efficiency

- Designed for Core Web Vitals

- Structured for AI discoverability

- Future-proofed for Google algorithm updates

Our standards already meet — and exceed — Googlebot crawl documentation requirements.

That’s why:

- Existing Technocrackers clients are unaffected

- New clients remain protected

- Rankings remain stable

- Indexing remains clean

- AI visibility improves

Key Takeaways

- Google did not reduce HTML crawl limits to 2MB

- HTML pages still have a 15MB crawl allowance

- Only non-HTML files are limited to 2MB

- PDFs remain crawlable up to 64MB

- Most websites are not impacted

- Technocrackers-built sites already follow best practices

Final Thoughts

This update isn’t a warning — it’s a reminder: performance, structure, and crawl efficiency matter more than ever in both SEO and AI-driven search.

At Technocrackers, we build websites that:

- Load fast

- Rank higher

- Crawl efficiently

- Scale safely

- Perform in AI search

If you want a high-performing, future-ready website built for SEO, speed, conversions, and long-term growth, request a free website consultation and quote with Technocrackers today.